“Any sufficiently advanced technology is indistinguishable from magic.”

— Arthur C. Clarke, author of 2001: A Space Odyssey

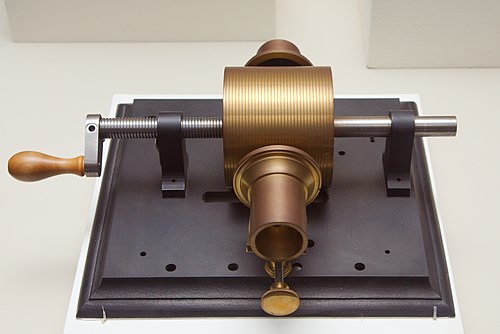

In 1877, Thomas Edison designed the first machine that could record and play back sound: the phonograph.

He experimented with a diaphragm which had an embossing point and was held against rapidly-moving paraffin paper. The speaking vibrations made indentations in the paper. Edison later changed the paper to a metal cylinder with tin foil wrapped around it. The machine had two diaphragm-and-needle units, one for recording, and one for playback. When one would speak into a mouthpiece, the sound vibrations would be indented onto the cylinder by the recording needle in a vertical (or hill and dale) groove pattern. Edison gave a sketch of the machine to his mechanic, John Kruesi, to build, which Kruesi supposedly did within 30 hours. Edison immediately tested the machine by speaking the nursery rhyme into the mouthpiece, “Mary had a little lamb.” To his amazement, the machine played his words back to him. [i] When his mechanic heard the recorded words he famously exclaimed, “Gott in Himmel!” (“God in heaven!”)

Edison took his new invention to the offices of Scientific American in New York City and showed it to staff there. As the December 22, 1877, issue reported, “Mr. Thomas A. Edison recently came into this office, placed a little machine on our desk, turned a crank, and the machine inquired as to our health, asked how we liked the phonograph, informed us that it was very well, and bid us a cordial good night.” Interest was great, and the invention was reported in several New York newspapers, and later in other American newspapers and magazines.[ii]

After the phonograph came radio. Then television. Then cassette players, VHS, CDs and DVDs.

The iPod arrived in 2001. It was the size of a deck of cards and could hold 1,000 songs. The first time a friend showed me his, he said, “It feels like the music is coming from God.”

Today, the latest source of technological amazement is AI.

I had read that Anthropic’s Claude was developed with a strong focus on safety and ethics, so I began using it. Every week, when I think my blog post is finished, I ask Claude to proofread it. Within seconds, it comes back with notes about clerical errors, misspellings, and phrasing, occasional questions about facts, and often an encouraging word.

Last week my post included spiritual reflections I had after walking a labyrinth. I asked Claude to proofread it, and it responded with some corrections. But the more I thought about it, the more I wondered whether this kind of personal knowing was something AI could ever experience. I took the last paragraph:

“Yearning, Sorrow, Sweetness. Moving On, Holding On, Opening. People complete the journey and disappear, as I will someday. In such moments of awareness, there is wonder.”

…and added one sentence…

“Yearning, Sorrow, Sweetness. Moving On, Holding On, Opening. People complete the journey and disappear, as I will someday. I don’t think AI chatbots will experience such feelings and think such thoughts, but we human beings can. In such moments of awareness, there is wonder.”

I asked Claude for an opinion about the added sentence. A few seconds after hitting the Enter key, I got this response:

“It’s a thought worth sitting with, because it cuts both ways.

The case for including it: It does sharpen the specifically human quality of the experience — the mortality, the longing, the bittersweet awareness. And given how much conversation there is right now about AI, it might resonate with some readers.

The case against: The piece is so beautifully self-contained and timeless in its imagery — labyrinths, oak trees, a cello on a hillside, the ancient themes of loss and love. The AI reference pulls the reader abruptly into 2026, and into a somewhat defensive or comparative posture, just at the moment when you’ve brought them to a place of quiet wonder. It risks deflating exactly the feeling you’ve just built.

There’s also a subtle irony worth considering: you’d be addressing the question of AI to me, an AI — and I’d gently push back on the certainty of the claim. Whether or not I “experience” anything is genuinely unresolved, philosophically speaking. So the sentence might inadvertently invite that debate into a piece that isn’t about that at all.

My honest suggestion: Leave it out. The final line — In such moments of awareness, there is wonder — is exactly the right landing. It’s earned, it’s still, and it’s universal. The AI sentence adds a claim but subtracts resonance.

That said, if the piece is specifically written for an audience you’ve been discussing AI with, or if that contrast is a theme in a larger body of writing, it could fit. Context matters.”

Reading this eloquent response gave me an eerie feeling. I could have whispered “Gott in Himmel!” but I don’t think Gott had anything to do with it. I could have said, “This feels like magic,” but I know it’s not magic. It did evoke awe. With a continuously evolving “intelligence” like this available to us, where are we headed? I agreed with Claude’s recommendation and omitted the sentence.

I have gained great respect for Claude and will continue to use it. It can certainly express thoughts that seem fully human. But they are not. They don’t come out of the flesh-and-blood experience of a human life lived in all its complexity; they are a product of computational power. We human beings labor to make sense of our lives using our thoughts, feelings, intuitions, dreams, conversations, imagination and personal memories. It’s hard work; what we gain is hard-earned. It is through this labor that we form our character.

[i] “History of the Cylinder Phonograph,” Library of Congress

[ii] Ibid.

Lead image: Edison’s first phonograph